Customizing the Insights Scope

The Sauce Labs Insights section lets you view your test data from several perspectives, so you can see both the big picture of your testing effectiveness and the individual slices of test data that help you pinpoint and fix the causes of failures.

Sauce Labs has also published an Insights API so you can build a custom dashboard with views that are specific to your test strategy.

Touring the Interface

Open Insights from the left-side navigation menu of our web app. From here, you have access to the seven primary views of your test data.

| Page | Description |
|---|---|
| Job Overview | Shows several views of the data for all tests that match the specified filter criteria, organized into two tabs. The Overview tab shows a test case health snapshot, a test summary, and test breakdowns by browser, OS, framework, and device. You can filter for Virtual Cloud (VDC) or Real Devices (RDC). |
| Errors | Shows the total number of errors across all tests that match the filter, for either Virtual Cloud or Real Devices, along with a graph of the error rate over time. Below the graph, each error is listed with the number of times it occurred in the selected period; click any error to see the list of tests in which it occurred. |
| Job History | Shows a visual snapshot of the results for a specific test over time. See the Test History page for descriptions of the specific views and capabilities. |
| Trends | Shows graphical visualizations of all tests. Applying filters to this view makes it easier to compare test outcomes across variables such as device or browser version. See the Trends page for detailed documentation. |
| Coverage | (Org Admin only) Shows the browsers and devices against which you've run tests, giving you an idea of how comprehensively you are testing across platforms so you can align your test strategy with your own market usage data. |
| VM Concurrency | Shows how many VM instances are in use simultaneously at any given time. See Team Concurrency for information about how concurrency is allocated. |
| Failure Analysis | Exposes the results of the Sauce Labs machine learning algorithms that comb through every command run in every test, and every error thrown in those tests, to identify emerging patterns. See the Failure Analysis page for detailed documentation. |
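To make "in use simultaneously" concrete, here is a minimal Python sketch that computes peak concurrency from a list of job start and end times using a sweep line. The `peak_concurrency` function and the `(start, end)` data shape are hypothetical and for illustration only; this is not how Sauce Labs computes the VM Concurrency chart.

```python
from datetime import datetime


def peak_concurrency(jobs):
    """Return the maximum number of jobs running at the same instant.

    `jobs` is a list of (start, end) datetime pairs (hypothetical data shape).
    A job that ends exactly when another starts is not counted as overlapping.
    """
    events = []
    for start, end in jobs:
        events.append((start, 1))   # a VM comes into use
        events.append((end, -1))    # a VM is released
    # Sort by time; at equal timestamps, releases (-1) sort before starts (+1).
    events.sort(key=lambda e: (e[0], e[1]))
    running = peak = 0
    for _, delta in events:
        running += delta
        peak = max(peak, running)
    return peak


# Four hypothetical jobs; three of them overlap between 9:20 and 9:25.
jobs = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 9, 30)),
    (datetime(2024, 1, 1, 9, 10), datetime(2024, 1, 1, 9, 40)),
    (datetime(2024, 1, 1, 9, 20), datetime(2024, 1, 1, 9, 25)),
    (datetime(2024, 1, 1, 9, 40), datetime(2024, 1, 1, 10, 0)),
]
print(peak_concurrency(jobs))  # 3
```

Processing releases before starts at the same timestamp means back-to-back jobs, such as the second and fourth jobs above, reuse a slot rather than inflating the peak.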

Using Filters to Adjust the Scope of Your Data

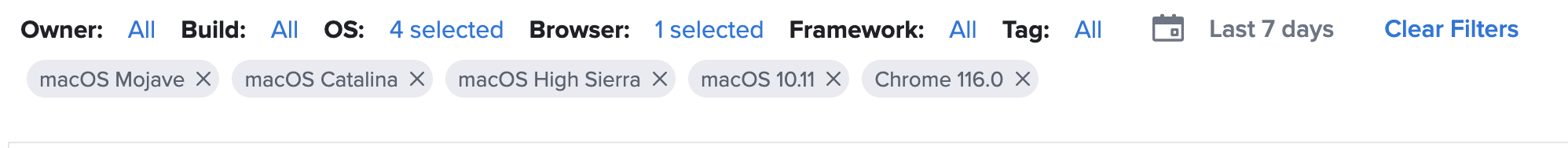

At the top of each page in the Insights interface is a set of properties by which you can filter the data shown on that page. As you specify values for each property, the setting is shown below the filters bar and the data on the page refreshes to reflect the applied filters.

The following table describes each of the filters available for customizing your test insights data.

| Filter | Description |
|---|---|
| Owner | Show data for tests owned by one or more individuals, teams, or your whole organization. The options available here depend on your access and role in your organization or team. |
| Build | Show data for tests included in the specified builds (groups of related tests). |
| OS | Show data for tests run on the specified operating systems. The menu lists each OS version separately. |
| Browser | (VDC only) Show data for tests run on the specified browsers. The menu lists each browser version separately so you can compare versions of the same browser. |
| Device | (RDC only) Show data for tests run on the specified devices. The menu lists each device version separately so you can compare versions of the same device. |
| Device Group | (RDC only) Show data for tests run on private or public devices. |
| Framework | Show data for tests run with the specified frameworks. |
| Tag | Show data for tests or builds categorized with the specified tags. The Tag filter is not available in all sections of the Insights interface. |
| Time Period | Show data for tests executed in the specified time period. In the selection modal, choose the Relative tab to set a duration up through the current day and time, or choose the Absolute tab to set a specific window between start and end dates. Times and dates are in the local time of the logged-in user, so if you do not see the data you expect for a period, consider whether the test was executed in a different time zone. |
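As a rough model of how these filters narrow the data, the following Python sketch encodes two rules from the table: Browser applies only to VDC tests while Device and Device Group apply only to RDC tests, and Time Period windows are interpreted in the viewer's local time zone. The names `InsightsScope`, `relative_window`, `absolute_window`, and `in_window`, and the filter keys, are hypothetical; they are not part of the Sauce Labs Insights API. The usage lines show why a test run in another time zone can fall outside an absolute window.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone

# Hypothetical filter keys mirroring the UI filters in the table above;
# these are NOT Sauce Labs Insights API parameters.
VDC_ONLY = {"browser"}
RDC_ONLY = {"device", "device_group"}


@dataclass
class InsightsScope:
    platform: str                                  # "vdc" or "rdc"
    filters: dict = field(default_factory=dict)    # e.g. {"os": ["Windows 11"]}

    def validate(self):
        # Reject filters that only exist for the other platform.
        not_allowed = RDC_ONLY if self.platform == "vdc" else VDC_ONLY
        bad = not_allowed & set(self.filters)
        if bad:
            raise ValueError(f"{sorted(bad)} not available for {self.platform.upper()}")


def relative_window(duration, now=None):
    """Relative tab: a duration up through the current moment."""
    if now is None:
        now = datetime.now().astimezone()
    return now - duration, now


def absolute_window(start, end, tz):
    """Absolute tab: fixed start/end, interpreted in the viewer's local time zone."""
    return start.replace(tzinfo=tz), end.replace(tzinfo=tz)


def in_window(executed_at, window):
    """True if an aware execution timestamp falls inside the window."""
    start, end = window
    return start <= executed_at <= end


# A viewer in UTC-5 selects January 10 as an absolute window.
viewer_tz = timezone(timedelta(hours=-5))
window = absolute_window(datetime(2024, 1, 10, 0, 0), datetime(2024, 1, 10, 23, 59), viewer_tz)

# A test run at 02:00 UTC on January 10 is 21:00 on January 9 for this viewer.
run = datetime(2024, 1, 10, 2, 0, tzinfo=timezone.utc)
print(in_window(run, window))  # False
```

Comparing timezone-aware datetimes converts both sides to a common instant, which is why the run is excluded even though its UTC date is January 10.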

Check out the individual sections of the Insights feature documentation for use cases illustrating how filtering your data view can help you pinpoint errors or inefficiencies in your tests so you can be confident that your app is functioning optimally.